It was a very short and quiet week of news. The Zscaler earnings review has already been sent. CrowdStrike, Rubrik, SentinelOne and a few other reviews are coming next week. I hope everyone celebrating had a wonderful Thanksgiving and has a great weekend.

Earnings Reviews from the Season:

- Datadog & Palo Alto Earnings Reviews.

- Zscaler Earnings Review.

- Sea Limited Earnings Review.

- On Holdings Earnings Review.

- Nu Holdings Earnings Review.

- The Trade Desk Earnings Review.

- Lemonade & Duolingo Earnings Reviews.

- Palantir & Hims Earnings Reviews.

- Cava Earnings Review.

- DraftKings Earnings Review.

- Microsoft & Cloudflare Earnings Reviews.

- Uber Earnings Review.

- Shopify & Coupang Earnings Reviews.

- Meta Earnings Review.

- Alphabet Earnings Review.

- Apple, ServiceNow & Starbucks Earnings Reviews.

- Amazon & Mercado Libre Earnings Reviews.

- PayPal Earnings Review.

- Tesla Earnings Review.

- SoFi Earnings Review.

- Netflix Earnings Review.

- Taiwan Semi Earnings Review.

1. Alphabet (GOOGL) – TPU News

TPU = Tensor Processing Unit. This is an Application-Specific Integrated Circuit (ASIC; a custom chip for a specific use case) designed by Google. These are high-performance compute (HPC) chips purpose-built for machine learning. Partners include Broadcom and now MediaTek for design, as well as Taiwan Semi as the manufacturer.

News:

There were two interesting pieces of TPU-related news this week, following the revelation that Gemini 3 (the world's top-ranked AI model) was built entirely on these chips, just like its predecessors.

First, Google announced a multi-year, multi-million-dollar Ironwood TPU deal with NATO, which gives the organization access to TPU compute within Google Cloud infrastructure. Alphabet has been renting capacity like this for years, and this deal and the recent Anthropic TPU deal are both signs of strong rental demand.

While the second piece of news is still just a rumor, I think it's even more exciting. Per The Information, Meta is exploring a multi-billion-dollar TPU purchase of its own. The most interesting part is that Google would potentially sell these chips outright to Meta for its own data centers. That would mark a stark expansion of Ironwood's addressable market and would pit Alphabet more directly against GPU designers Nvidia and AMD. We've known Google is open to selling these chips outright for a while, but this is the clearest sign of that objective bearing fruit.

There has been a common view that TPUs were too custom and purpose-built to be useful outside of Google Cloud. This shows that another computing giant is seriously considering using them in its own, entirely different data centers, indicating that these chips are more malleable than many think. That malleability barrier was thought to be the main reason news like this could never happen and why generalist GPU leaders would capture most of the demand.

Nvidia Is Still the General-Purpose King:

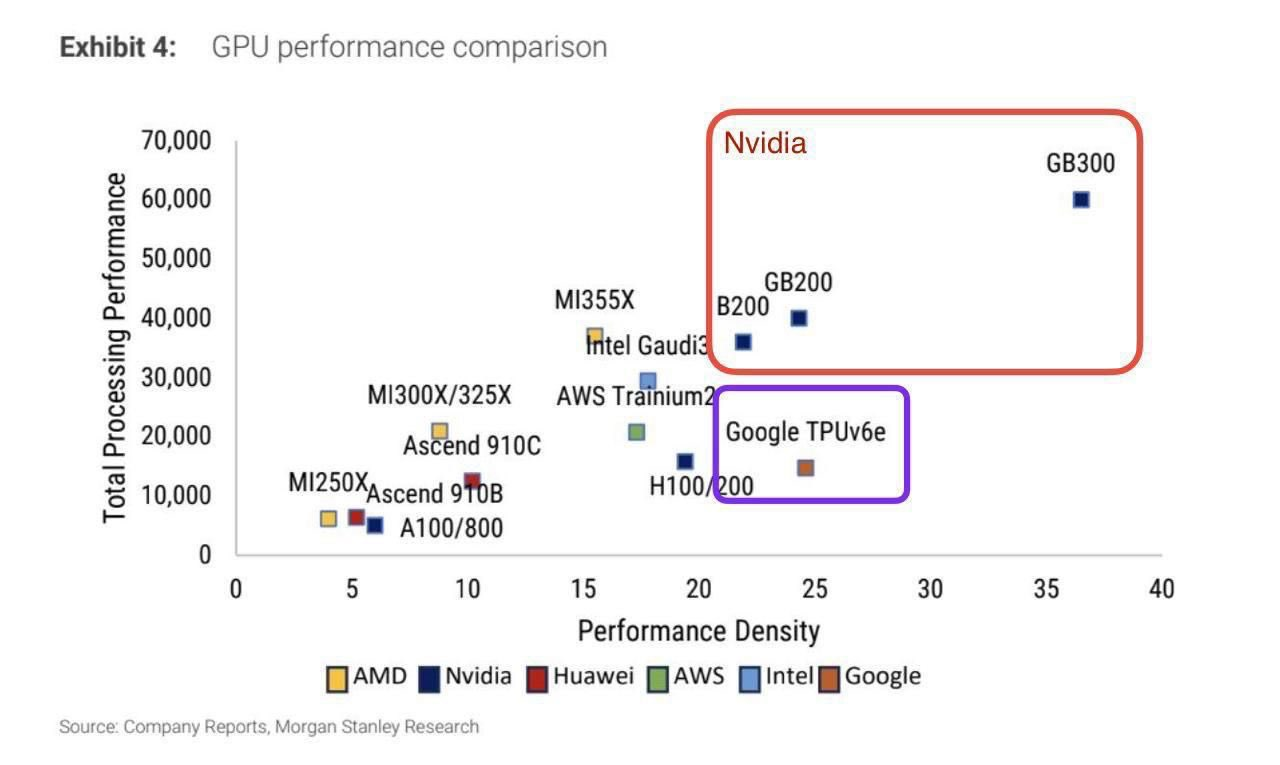

Both pieces of news raise the question: how many clusters can be TPU-based instead of GPU-based, and what does that mean for the players involved? To start, Nvidia's Grace-Blackwell platform is still the best general-purpose HPC platform in existence. Its performance is best-in-class, and its lead in FLOPS (floating-point operations per second; a unit of computational throughput) is large.

Nvidia GPUs do far more. They're more versatile across use cases beyond model training and inference, which are really the only things TPUs are good at. Nvidia's software ecosystem (CUDA) is also considered better than Google's Python-based JAX/XLA offering: it's more scaled, more malleable across different data center environments and offers what many view as better integrations with popular machine learning tools like PyTorch. Google is getting there, but Nvidia is still ahead in this regard. That lets Nvidia extract more performance gains from a given GPU generation over its life than Google will with TPUs. Nvidia's generalist ecosystem functions as an army of optimizers dedicated to extracting more value from existing hardware. That's very important, and Alphabet doesn't match it with its own software. It matters a lot for depreciation schedules because it delays expensive hardware obsolescence, and it should keep supporting GPU demand.
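To make the depreciation point concrete, here is a minimal straight-line depreciation sketch. The $10B cluster cost and the 5- vs. 6-year useful lives are hypothetical numbers chosen for illustration, not figures from any company's filings, and `annual_depreciation` is a made-up helper. The only point is that software gains which stretch a chip's useful life shrink the annual expense.

```python
def annual_depreciation(cost, useful_life_years, salvage=0.0):
    """Straight-line depreciation: spread (cost - salvage) evenly over the useful life."""
    return (cost - salvage) / useful_life_years


# Hypothetical $10B GPU cluster; the cost and both useful lives are illustrative assumptions.
cluster_cost = 10e9
for life in (5, 6):
    per_year = annual_depreciation(cluster_cost, life)
    print(f"{life}-year life: ${per_year / 1e9:.2f}B per year")
```

In this toy case, stretching the assumed life from five to six years cuts the annual charge from $2.00B to about $1.67B.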

TPUs are a Real Alternative for Some Use Cases:

But? Doing a lot less can be seen as an edge for TPUs too. Fewer use cases mean a tighter focus on large-scale machine learning. TPUs are AI specialists that can allocate 90% of their capacity to AI. They don't need to dedicate much chip area to other workflows like graphics. They don't need to know how to do other things. They can specialize in machine learning & be a complete beginner at everything else GPUs can do. That singular, cohesive purpose also makes it much easier to connect far larger clusters of TPUs than GPUs, adding to potential efficiency and density advantages. TPUs also don't move data in and out of the chips sporadically, which makes costs more predictable across workloads. And because TPUs run the same kinds of workloads, data can be passed between chips more easily, which adds to that cost efficiency.

- Google TPUs move data in a steady, predictable flow. That improves cost efficiency but depends on the TPUs running only a very small set of workload types. This design is called a systolic array (the lingo isn't important for investors).

- Nvidia GPUs support random, sporadic data access shared across far more diverse workloads than TPUs handle. This is more expensive, but it enables a much broader range of use cases beyond machine learning.
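For readers curious what "steady and predictable flow" means mechanically, below is a toy simulation of an output-stationary systolic array computing a matrix multiply. It is a minimal illustration of the general technique under simplified assumptions, not Google's actual TPU design: `systolic_matmul` is a hypothetical name, and real hardware involves much larger arrays, memory pipelines and other details omitted here. Each processing element (PE) only ever receives values from its left and upper neighbors on a fixed schedule, which is the opposite of the random access pattern described for GPUs.

```python
def systolic_matmul(A, B):
    """Simulate an n x m grid of PEs computing C = A @ B (A is n x K, B is K x m).

    A values enter on the left edge and move one PE right per cycle; B values
    enter on the top edge and move one PE down per cycle. Inputs are skewed so
    A[i][k] and B[k][j] meet at PE(i, j) on cycle i + j + k. Each PE keeps its
    own running sum (output-stationary) and never touches main memory.
    """
    n, K, m = len(A), len(B), len(B[0])
    a_reg = [[0] * m for _ in range(n)]  # A value currently held by each PE
    b_reg = [[0] * m for _ in range(n)]  # B value currently held by each PE
    acc = [[0] * m for _ in range(n)]    # per-PE accumulator, i.e. C[i][j]

    for t in range(n + m + K - 1):  # enough cycles for the last pair to meet
        new_a = [[0] * m for _ in range(n)]
        new_b = [[0] * m for _ in range(n)]
        for i in range(n):
            for j in range(m):
                if j == 0:  # left edge: feed row i of A, delayed by i cycles
                    k = t - i
                    new_a[i][j] = A[i][k] if 0 <= k < K else 0
                else:       # otherwise take the value from the left neighbor
                    new_a[i][j] = a_reg[i][j - 1]
                if i == 0:  # top edge: feed column j of B, delayed by j cycles
                    k = t - j
                    new_b[i][j] = B[k][j] if 0 <= k < K else 0
                else:       # otherwise take the value from the upper neighbor
                    new_b[i][j] = b_reg[i - 1][j]
        a_reg, b_reg = new_a, new_b
        # Every PE does one multiply-accumulate per cycle, in lockstep.
        for i in range(n):
            for j in range(m):
                acc[i][j] += a_reg[i][j] * b_reg[i][j]
    return acc


print(systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
```

The design choice to show here is that data movement is fully determined in advance by the grid shape, which is what makes this layout cheap and efficient for dense matrix math but poorly suited to irregular workloads.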

One more note on elite TPU efficiency. Alphabet is better at utilizing its available FLOPS (the lingo is Model FLOPS Utilization, or MFU), which makes Nvidia's perceived lead over Google larger than it actually is. SemiAnalysis wrote about that this week. That's the beauty of Alphabet's end-to-end, full-stack AI approach. They can make sure all of this hardware works perfectly for their own needs and the needs of a few giant customers like Meta and Anthropic. It's simply easier to build great products for a smaller, less diverse set of needs, which paves the way for Alphabet to be more efficient than alternatives for some inference workloads. Some analysts believe this lead can exceed 2X for a subset of models and tasks. Certainly not for everything... but that should be enough to win some market share, especially if these advantages extend to other cloud environments.
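A quick worked example helps show why utilization can shrink a paper FLOPS lead. The sketch below assumes the common approximation of roughly 6 FLOPs per parameter per token for dense-transformer training (forward plus backward pass, ignoring attention); the chip peak figures and MFU values are made up for illustration and are not measured numbers for any real Nvidia or Google part.

```python
def training_mfu(n_params, tokens_per_sec, peak_flops_per_sec):
    """Model FLOPs Utilization for dense-transformer training.

    Assumes ~6 FLOPs per parameter per token (forward + backward pass),
    a standard approximation that ignores attention FLOPs.
    """
    achieved_flops_per_sec = 6 * n_params * tokens_per_sec
    return achieved_flops_per_sec / peak_flops_per_sec


def effective_flops(peak_flops_per_sec, mfu):
    """Useful model FLOPs actually delivered per second."""
    return peak_flops_per_sec * mfu


# Hypothetical chips: the peak figures and MFUs below are illustrative assumptions.
chip_a = effective_flops(2.0e15, 0.35)  # higher peak, lower utilization
chip_b = effective_flops(1.5e15, 0.50)  # lower peak, higher utilization

print(f"Peak ratio A/B:      {2.0e15 / 1.5e15:.2f}x")
print(f"Effective ratio A/B: {chip_a / chip_b:.2f}x")
```

With these made-up numbers, chip A's 1.33x lead in peak FLOPS turns into roughly 0.93x of chip B's useful throughput.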

Together, efficient data transfer and simpler hardware (because TPUs only need to serve a very small number of HPC use cases rather than all of them) make TPUs more cost-effective for some specific training and inference workloads.

Company Implications:

So what does this mean for companies? First, I think Alphabet will keep exploring external sales of these chips to giants like Meta. Meta is a unique case in that it's a mega-cap without a public cloud business, so selling it these chips doesn't erode Google Cloud's positioning the way selling to Amazon or Microsoft would. While sales to those vendors are possible, I'd expect Alphabet to focus on non-public cloud buyers for this business. I am confident that Alphabet won't come close to Nvidia's 65% EBIT margin on these sales, as its chips are not as powerful or versatile. But? That doesn't matter. Any incremental EBIT is an incremental positive for Google's overall profit dollars, and it has no existing sky-high-margin chip business to protect. This can be a very successful sales initiative even at a 10% EBIT margin while vastly undercutting every other player in the market. That pricing flexibility, paired with Ironwood's highly respectable performance and Alphabet's demonstrated ability to build the best model on the planet, bodes very well for its growing presence in this space. Alphabet has emerged as a highly capable ASIC vendor.
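To show how much room a 10% margin target leaves for undercutting, here is a simplified pricing sketch. It assumes, loudly, that both vendors carry the same fully loaded cost per chip (normalized to 1.0) and treats EBIT margin as a per-unit margin on that cost; real cost structures differ and operating expenses don't scale this neatly. `price_for_margin` is a hypothetical helper, not anyone's actual pricing model.

```python
def price_for_margin(unit_cost, ebit_margin):
    """Price at which (price - unit_cost) / price equals the target EBIT margin."""
    return unit_cost / (1 - ebit_margin)


# Assumption: both vendors have the same fully loaded unit cost, normalized to 1.0.
# This isolates the effect of the margin target and ignores real cost differences.
unit_cost = 1.0
nvidia_like_price = price_for_margin(unit_cost, 0.65)  # ~65% EBIT margin
google_price = price_for_margin(unit_cost, 0.10)       # hypothetical 10% EBIT margin

discount = 1 - google_price / nvidia_like_price
print(f"Price at 65% margin: {nvidia_like_price:.2f}")
print(f"Price at 10% margin: {google_price:.2f}")
print(f"Discount while still profitable: {discount:.0%}")
```

Under these simplified assumptions, a vendor targeting a 10% margin could price roughly 61% below one targeting 65% and still turn a profit.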

As already mentioned, Broadcom and Taiwan Semiconductor are both deeply involved, but what about GPU designers like Nvidia and AMD? For now, I think those two are also fine. The world is so starved for compute amid the AI infrastructure boom that one more player emerging probably won't noticeably affect near-term results for GPU vendors. At the same time, the supply-demand mismatch will not be permanent. Compute supply will catch up at some point, and when it does, this will matter.

Whenever that happens, more perfect competition and a larger number of viable options will inherently weigh on pricing power, which will hit demand and margins. The level of impact will depend on the health of demand in that cycle and how aggressively Alphabet sells these chips to external customers, but I don't think it will prove immaterial. None of this is to say Nvidia is going to die or come close to a fate like that. But? I don't think their next few years of results will look nearly as good as the incredible, masterful, historic results we've seen since Hopper. The conversation for AMD is basically the same, perhaps with a bit more competitive concern, considering the gross performance gap between Ironwood and Nvidia's Rubin is larger than the gap between Ironwood and AMD's MI400 family.

And for the remaining public cloud vendors, Alphabet just made building great chips internally even more urgent.

They see a competitor sidestepping the need to pay a vendor like Nvidia its sky-high margin, thanks to Google Cloud's deeper vertical integration. Cloud competitors know that winning against Gemini and Google Cloud will be difficult if that cost disadvantage proves durable. That's why Microsoft and OpenAI are now working on their own hardware, and why Amazon has been for years. They all feel the heat. Cloud giants don't want to pay a fortune for chips while a direct competitor makes its own, and they don't want to pay that direct competitor (Google) for chips either. They surely see the cost edge Google has built, and they know it means denser, more efficient and higher-quality models and agents for Alphabet than for everyone else.

- The TPU business enjoying strong momentum supports its core cloud business too. Want to run machine learning workloads at half the cost? Well… you need TPUs & so you need Google Cloud. Being so cost effective for AI gives this business another key selling point and should help it win more migrations.

Conclusion:

No matter how this shakes out, Google looks to be in great shape. Furthermore, if we do see chip cost deflation stemming from more perfect competition, that is great news for every single AI app layer participant. Cheaper compute means budgets stretch further and product advancement can accelerate. Broadcom and TSM are fine, as they are key parts of the TPU supply chain. Nvidia and AMD are also probably fine in the short-to-mid term as long as the chip supply/demand mismatch remains in place. There's so much demand right now that the impact of another capable vendor will be masked by that overall momentum. Companies like Microsoft expect that mismatch to last for at least another three quarters. After that, these GPU leaders could see incremental margin and demand pressure as TPUs take some demand for a specific subset of machine learning workloads.

2. Headlines

Robinhood is evolving its prediction market structure to offer this product directly through a new joint venture (JV) with Susquehanna. This should give it more flexibility to expand the variety of contracts it offers. In other prediction market news, a federal judge removed prediction market protections, allowing states to treat sports prediction markets as gambling. That allowed Nevada to issue an aggressive statement telling operators to stop operating. Robinhood had to (at least temporarily) shut down operations in Nevada because of this, and other states are now free to follow suit if they'd like. Finally, DraftKings launched its Spanish-language app.

Amazon is investing $50B in AWS capacity and tooling for the U.S. Government.

We saw a strong GDP number out of Argentina for September: growth was 5% vs. 1.9% expected. That's good news for Mercado Libre's highest-margin, highest-market-share country. Argentina has alternately carried their performance and held results back in recent quarters. It's not monumentally important for the multi-year investment case, but worth noting.

Uber added PacSun, Camping World, Pet Valu and Lush to its Grocery & Retail (G&R) segment and debuted its WeRide Robotaxi Service in Abu Dhabi.

Shopify got a letter from 25 state attorneys general asking it to stop allowing merchants to sell illegal e-cigarette products. I fully expect it to comply, and I don't see this having a material impact on sales. We have no data on the share of GMV coming from e-cigarettes, but I can't imagine removing the illegal portion of that likely small bucket will be a material negative.

3. Macro

Inflation:

- The Producer Price Index (PPI) for September was 0.3% vs. 0.3% expected and -0.1% last month.

Consumer & Employment:

- Core Retail Sales for September rose by 0.3% vs. 0.3% expected and 0.6% last month.

- Retail Sales for September rose by 0.2% vs. 0.4% expected and 0.6% last month.

- Conference Board Consumer Confidence for November was 88.7 vs. 93.5 expected and 95.5 last month.

- Initial Jobless Claims were 216,000 vs. 226,000 expected and 222,000 last report.

Output:

- The Chicago Purchasing Managers Index (PMI) for November was 36.3 vs. 44.3 expected and 43.8 last month.