a. Nvidia 101

Nvidia designs semiconductors for data center, gaming and other use cases. It’s considered the technology leader in chips meant for accelerated compute and generative AI (GenAI) use cases. While that’s where it specializes, it does a lot more. Its toolkit includes chips, servers, switches, networking, AI models and cutting-edge software to optimize the hardware it provides. Owning more pieces of GenAI infrastructure means opportunity for more software-based product optimization.

The following items are important acronyms and definitions to know for this company:

Chips:

- GPU: Graphics Processing Unit. This is an electronic circuit used to process visual information and data.

- CPU: Central Processing Unit. This is a different type of electronic circuit that carries out tasks/assignments and data processing from applications. Teachers will often call this the “computer’s brain.”

- Blackwell: Nvidia’s modern GPU architecture designed for accelerated compute and GenAI. It replaces Hopper. Rubin is the next platform after Blackwell. Then Feynman.

- Grace: Nvidia’s new CPU architecture that is designed for accelerated compute and GenAI.

- GB300: Its Grace Blackwell Superchip with Nvidia's latest “Blackwell Ultra” GPUs and ARM Holdings tech.

Connectivity:

- NVLink Switches: Designed to aggregate and connect (or “scale-up”) Nvidia GPUs within one or a couple of server racks. This creates a sort of “mega-GPU.” GPU connections power greater efficiency, performance and computing scale (so cost advantages).

- The newest system allows for 576 total GPUs to be connected.

- InfiniBand: Standardized interconnectivity tech providing an ultra-low latency computing network. This can connect larger batches of server racks for more scalability (or “scale-out”).

- Nvidia Spectrum-X: Offers functionality and performance similar to InfiniBand, but is Ethernet-based.

- Ethernet is vital for connecting larger compute clusters.

- All 3 of these products are driving strong growth in this budding segment.

NVLink Fusion allows companies to build “semi-custom” AI infrastructure with Nvidia and its integration ecosystem. GPUs are general-purpose in nature. They’re not granularly designed for every single niche use case like an Application-Specific Integrated Circuit (ASIC). NVLink Fusion can help Nvidia capture more of that custom-silicon demand by working with Marvell and a few other partners to more easily emulate purpose-built hardware.

The Nvidia GB300 NVL72 is its rack-scale computing system. Rack scale means the entire server rack powers computation rather than a single server. Because this includes Blackwell chips and NVLink switches, it’s partially in the compute bucket and partially in networking. This aggregated product is the core revenue driver right now.

Software, Models & More:

- NeMo: Guided step-functions to build granular GenAI models for client-specific needs. It’s a standardized environment for model creation.

- CUDA: Nvidia-designed computing and program-writing platform purpose-built for Nvidia GPU optimizations. CUDA helps power things like Nvidia Inference Microservices (NIM), which guide the deployment of GenAI models (after NeMo helps build them).

- NIMs help “run CUDA everywhere” — in both on-premise and hosted cloud environments.

- GenAI Model Training: One of two key layers to model development. This seasons a model by feeding it specific data.

- GenAI Model Inference: The second key layer to model development. This pushes trained models to create new insights and uncover new, related patterns. It connects data dots that we didn’t realize were related. Training comes first. Inference comes second… third… fourth etc.

- Omniverse is its digital twin-building platform. This enables deep testing and learning in a zero-stakes, simulated environment, turbocharging experimentation and progress.

- Cosmos is its suite of world foundation models and apps for physical AI. It’s grounded in laws of physics and everything needed to effectively understand the physical world.

- Thor is the name of its platform for robotics and physical AI.

DGX: Nvidia’s full-stack platform combining its chipsets and software services.

b. Key Points

- Strong results for its data center segment.

- Rubin is on track to ramp later this year.

- Guidance now includes stock comp as a non-GAAP expense.

- Expects to exceed previously communicated $500B Blackwell + Rubin revenue target.

c. Demand

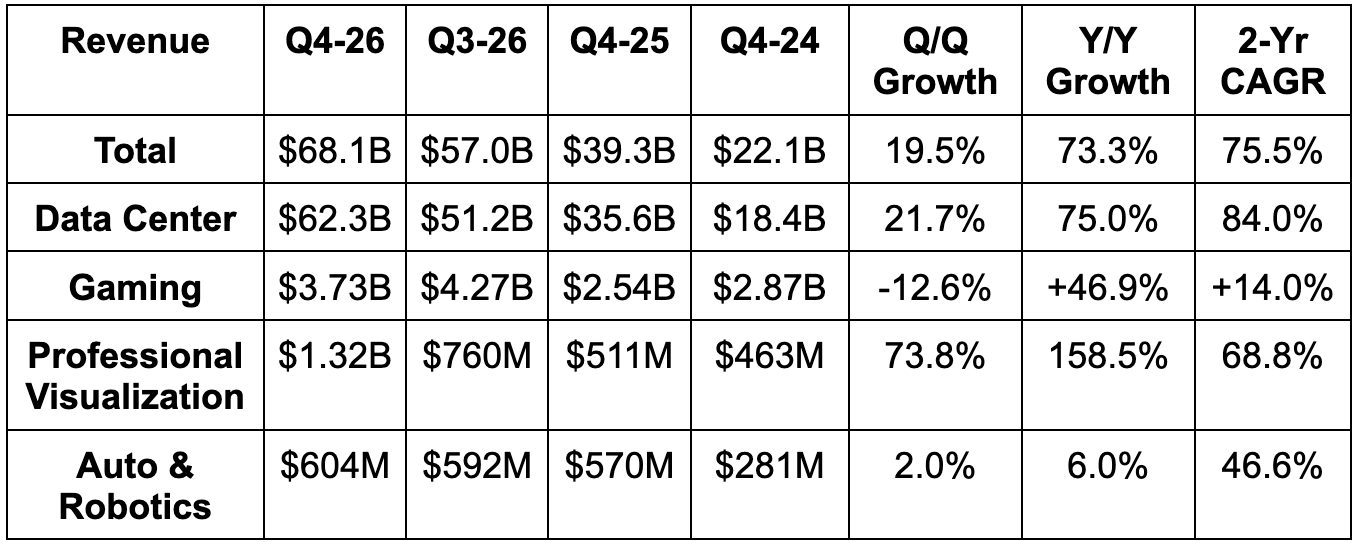

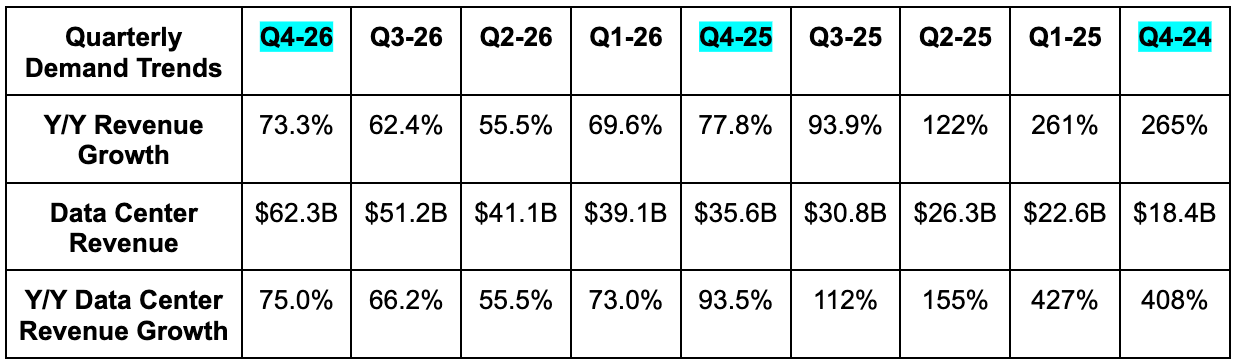

- Beat revenue estimates by 2.9% and beat guidance by 4.7%. There is no revenue from China in these results.

- Beat data center revenue estimates by 3.7%.

- Beat Networking revenue estimates by 22%. Networking revenue rose by 263% Y/Y vs. 162% last quarter.

- Slightly missed Compute revenue estimates. Compute revenue rose by 58% Y/Y vs. 56% last quarter.

- Annual networking revenue has now grown by 10x in 5 years since NVDA bought Mellanox.

- Beat gaming revenue estimates by 6%.

- Beat professional visualization revenue estimates by 71%. This was the first quarter in which the segment crossed $1B in revenue.

- Missed auto revenue estimates by 6%.
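The 10x-in-5-years networking figure above can be restated as an annualized growth rate. This is a minimal sketch that assumes smooth compounding over the period, which real results did not follow (growth was lumpier, as the 263% Y/Y print shows). The function name `cagr` is illustrative.

```python
def cagr(multiple: float, years: float) -> float:
    """Compound annual growth rate implied by growing `multiple`-fold over `years`."""
    return multiple ** (1.0 / years) - 1.0


# Inputs taken from the bullet above: 10x growth over 5 years.
networking_cagr = cagr(10.0, 5)
print(f"Implied annualized networking growth: {networking_cagr:.1%}")
```

On these inputs, the implied rate is a bit under 60% per year, sustained for five years.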

As you would expect, strength across Blackwell, NVLink, Spectrum-X, CUDA, and its entire data center-focused product suite powered the outperformance for the quarter. It has now delivered Blackwell capacity equal to nine gigawatts of compute for customers and has enjoyed a very strong ramp for Blackwell Ultra, the next iteration of this GPU platform. On the networking side, scale-up (NVLink) and scale-out (mostly Spectrum-X) demand were both exceedingly healthy.

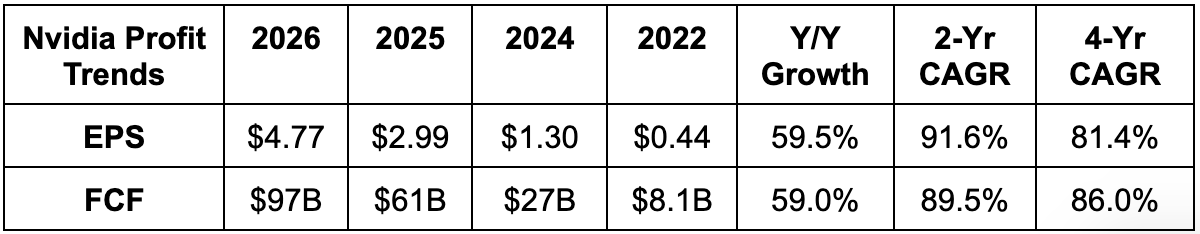

d. Profits & Margins

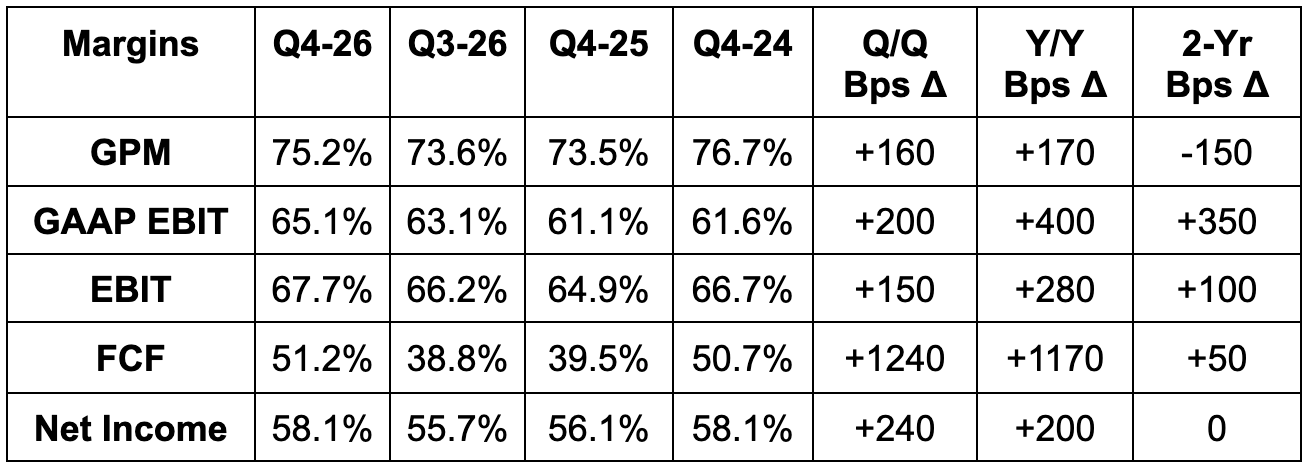

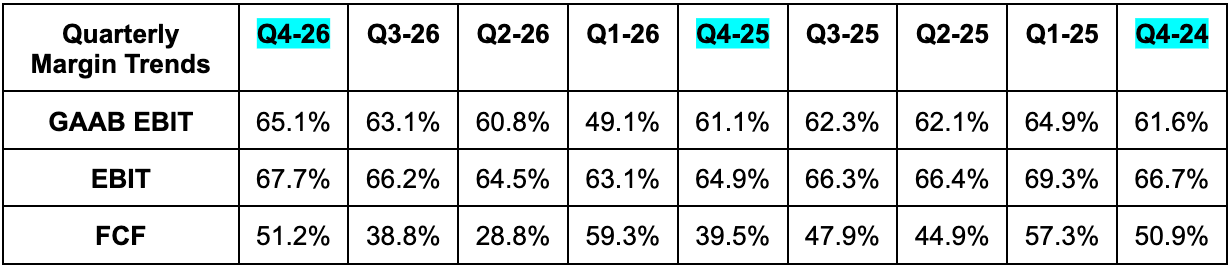

- Slightly beat GPM estimates & guidance.

- Beat FCF estimates by 3%.

- Beat EBIT estimates by 3.1% and beat guidance by 5.3%.

- Beat $1.54 EPS estimates by $0.08. This was helped by a 15.4% tax rate vs. 17% expected. Without this help, the beat would have been narrower.
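To gauge how much of the EPS beat came from the lower tax rate, the reported figure can be restated at the expected rate. This is a rough sketch, not the company's or analysts' method. It assumes pre-tax profit per share is unchanged and that both quoted tax rates apply to the same earnings base. The helper name `eps_at_tax_rate` is hypothetical.

```python
def eps_at_tax_rate(reported_eps: float, actual_rate: float, assumed_rate: float) -> float:
    """Restate EPS at a different tax rate, holding pre-tax profit per share fixed."""
    pretax_eps = reported_eps / (1.0 - actual_rate)
    return pretax_eps * (1.0 - assumed_rate)


estimate = 1.54
reported = estimate + 0.08  # $1.62, implied by the $0.08 beat

# 15.4% actual vs. 17% expected, both from the bullet above.
normalized = eps_at_tax_rate(reported, actual_rate=0.154, assumed_rate=0.17)

print(f"Reported EPS:          ${reported:.2f}")
print(f"EPS at 17% tax rate:   ${normalized:.2f}")
print(f"Beat after adjustment: ${normalized - estimate:.2f}")
```

On these inputs, the tax rate accounts for about $0.03 of the $0.08 beat. The exact split depends on the earnings base analysts modeled.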

GPM strength was helped by lower provisions this quarter compared to the Y/Y period. Furthermore, Blackwell continues to ramp and enjoy better economies of scale, which is diminishing the margin dilution associated with that growth. Going forward, NVDA sees GPM sustainability and pricing power amid memory inflation as resting mainly on its ability to keep delivering GPUs that perform better than anyone else's. Product superiority drives demand, and pricing power follows. This is the only way NVDA will be able to maintain an incredible 68% EBIT margin, which, for context, is much better than AMD's gross margin.

GAAP and non-GAAP operating expenses rose by 45% Y/Y and 51% Y/Y, respectively. This was driven by compensation growth from higher headcount, as well as rising compute infrastructure costs tied to the company's explosive growth.

e. Balance Sheet

- $62.6B in cash & equivalents; $22.2B in equity securities.

- Inventory rose by 112% Y/Y; $8.5B in debt.

- Diluted share count fell by 1.2% Y/Y.

- Days sales outstanding (DSO) fell from 53 to 51 Q/Q. This was based on collection timing.

The inventory growth is certainly notable. NVDA is purchasing inventory to service demand further out than it normally would, in response to what it views as strong demand signals and heightened visibility. This confidence stems from discussions with customers and rising purchase commitments, but also from encouraging utilization rates for older models. Those utilization rates point to long depreciation schedules, which should support demand for older platforms and reduce the risk of inventory waste. More on this later.

f. Guidance & Valuation

- Q1 revenue guidance is 8.3% ahead of estimates. Guidance does not include any shipments to China.

- Q1 75% GPM guidance met estimates.

- Q1 EBIT guidance is 5% ahead of estimates. Nvidia is now including stock comp in non-GAAP EBIT guidance. That could explain why the profit raise was smaller than the demand raise.

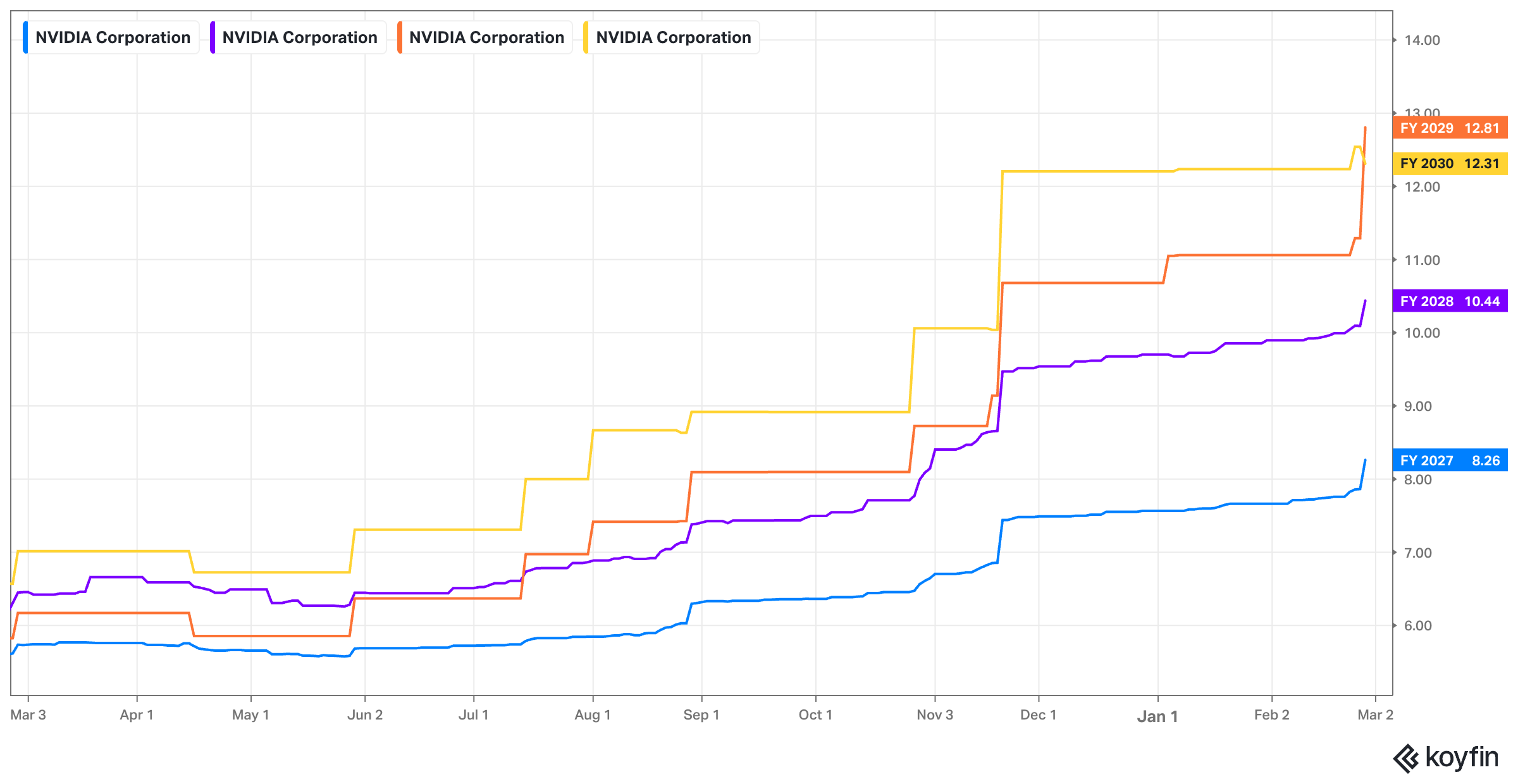

For the full year, NVIDIA now expects to exceed the previously communicated goal of $500B in combined 2025 and 2026 Blackwell + Rubin revenue. Furthermore, they remain comfortable with a mid-70% GPM for the year. And finally, they continue to expect low-40% Y/Y operating expense growth as they invest to support demand.

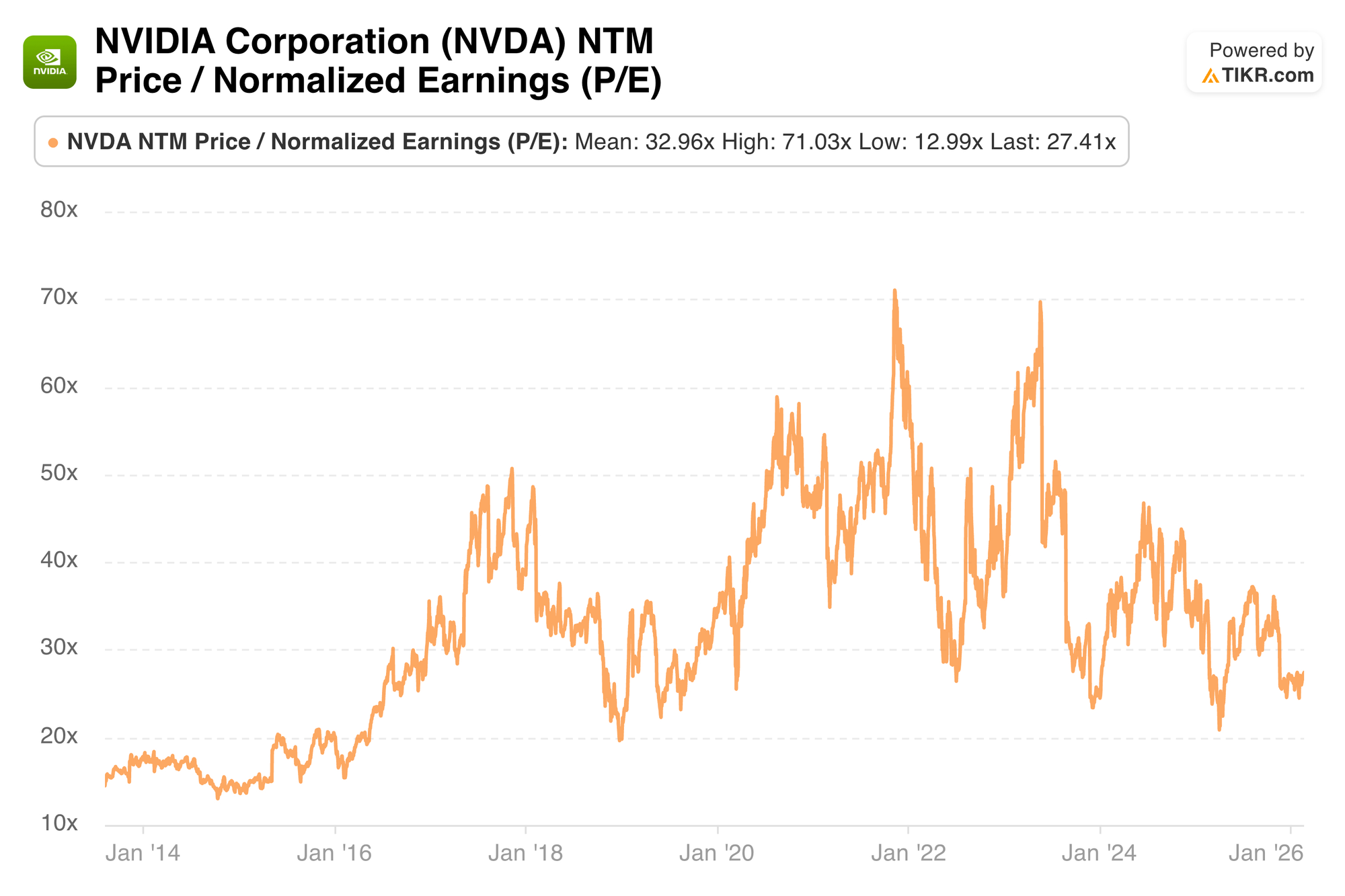

Nvidia trades for 25x forward EPS. EPS is expected to grow by 65% Y/Y this year and by 28% next year.
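The multiple and growth figures above can be rolled backward and forward to show how quickly the valuation compresses if estimates hold. This sketch assumes the 25x multiple is on this year's expected EPS, which the text does not state explicitly, and it treats consensus growth as given. Variable names are illustrative.

```python
forward_pe = 25.0        # assumed to be price / this year's expected EPS
growth_this_year = 0.65  # expected EPS growth, this year
growth_next_year = 0.28  # expected EPS growth, next year

# Last year's EPS was lower, so the same price implies a higher trailing multiple.
trailing_pe = forward_pe * (1 + growth_this_year)

# Next year's EPS is higher, so the same price implies a lower multiple.
next_year_pe = forward_pe / (1 + growth_next_year)

print(f"Trailing multiple:          {trailing_pe:.1f}x")
print(f"Multiple on this year EPS:  {forward_pe:.1f}x")
print(f"Multiple on next year EPS:  {next_year_pe:.1f}x")
```

The compression only materializes if EPS grows as expected, which in turn depends on margins and demand holding up.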

g. Call

Data Center Runway – Performance & Pace of Progress:

World-class GPU performance and rapid pace of improvement are two main ingredients for gauging AI supercycle longevity. If Nvidia's products are better than everyone else's, it will likely continue to garner the lion's share of GPU demand. If a GPU is more efficient than the competition, then a company can spend the same budget on even more capacity to create faster innovation, bigger revenue growth and better products. Enterprises around the world are obsessed with adding high-performance compute capacity in the most efficient manner possible. They know unlocking more revenue and product enhancement opportunities with their finite budgets will be the difference between them durably standing out vs. the competition… or not. Per leadership, NVIDIA's advantages in cost per token and compute productivity are clear and growing clearer with time.

And if Nvidia delivers new iterations far better than their previous leading models? Companies will remain motivated to upgrade, knowing the benefits will justify the costs. Signs there are excellent. Blackwell Ultra boasts 50x performance and 35x cost savings advantages vs. NVIDIA's Hopper platform. From there, the new Rubin GPU platform cuts inference costs by 10x compared to NVIDIA Blackwell, with several customers already enjoying these advantages in early testing. Amazingly, this platform will allow customers to run Mixture of Experts (MoE) models with ¼ of the GPUs needed to do the same work as before. That’s just one year of innovating! They'll continue rapidly progressing and sticking to their one-year platform introduction cadence, making it very hard for anyone to catch them.

NVDA’s massive $20B R&D budget gives it more than enough firepower to keep investing in improvements, and its demonstrated progress (faster than anyone else) bodes well for them staying ahead.

- Rubin is on track to ramp shipments in the second half of the year.

Data Center Runway – Useful Life:

Signs of the 5-6 year depreciation schedules for NVIDIA GPUs being fair remain clear. That's why aging Hopper and Ampere platforms continue to boast strong demand and cloud utilization rates years after launching. As a reminder, NVIDIA leadership even told us last quarter that Ampere remained 100% utilized six years into its life cycle. If that doesn't support an argument that a six-year depreciation schedule is fair, I don't know what will. Clear as day.
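To see why the useful-life debate matters for buyers' reported profits, here is a small sketch of annual depreciation expense under different schedules. The $1,000M purchase is hypothetical, and straight-line depreciation with zero salvage value is a simplifying assumption rather than any specific customer's accounting policy.

```python
def annual_depreciation(cost: float, useful_life_years: int, salvage: float = 0.0) -> float:
    """Straight-line depreciation expense per year."""
    return (cost - salvage) / useful_life_years


gpu_purchase_musd = 1_000.0  # hypothetical GPU purchase, in $M

for life in (3, 4, 5, 6):
    expense = annual_depreciation(gpu_purchase_musd, life)
    print(f"{life}-year schedule: ${expense:,.1f}M per year")
```

Stretching the schedule from three years to six halves the annual expense on the same hardware. That is why evidence of older platforms staying fully utilized matters to the argument.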

How is NVDA able to preserve strong interest in increasingly dated offerings, even as its pace of progress remains rapid and new offerings stay this promising? Software. CUDA continues to serve as a compute performance optimization engine with an army of developers dedicated to making sure this compute ecosystem is running as optimally as possible. And encouragingly, optimization work on one platform delivers value to every platform. These learnings can be extrapolated and borrowed across Hopper, Blackwell, Rubin, and all future lineups. That means the work is especially productive and especially valuable, which naturally drives more motivation to keep boosting NVIDIA GPU performance specifically. They won the vast majority of GPU market share by having better products, and now they're making sure software perfectly complements this dominant position, extending their lead and creating stickier customer relationships in the process.

- Software optimization work is what makes highly compute-intensive inference workloads rational to run, and is what makes gigantic CapEx budgets for tech giants rational to offer.

Data Center Runway – Inning & Visibility:

Nvidia is adamant that customers are already delivering strong returns on GenAI investments. Improving ad targeting, content personalization, customer service and more are all helping clients turn costs into profitable growth. We hear Meta talking about a boost to ad monetization or customer engagement every single quarter, and that's because of the investments they're making in AI. And now, Huang sees the proliferation of agentic AI in enterprises beginning. Whether that's due to Anthropic’s recent agentic tool introductions, OpenClaw, OpenAI's Codex, or other tools, AI automation and monetization are beginning to move beyond chatbot interfaces and into the realm of real work. The potential has been there, but the familiar apps and interfaces needed to get humans comfortable with embracing these tools have not been. That's now changing, which is vital for accelerating adoption. The vast majority of users do not want to interact with a terminal on their PC. They want a pretty front-end interface that makes it obvious how to extract value from these tools.

Strong GenAI returns paired with promising agentic AI prospects illustrate why hyperscalers are spending so aggressively on CapEx for 2026. As leadership told us constantly throughout the call, the more compute you have, the more revenue you will generate. Furthermore, the tokens (which are the outputs AI delivers that lead to revenue) are already profitable within GenAI. There's no reason to believe that won't eventually be the case for agentic AI, which should support more durable demand as costs are justified by returns.

As agentic AI explodes in popularity, NVDA expects that momentum to be joined by a proliferation in physical AI, as autonomous vehicle platforms and humanoid robot concepts take form.

In terms of demand visibility, NVIDIA is spending so aggressively on inventory because multi-quarter demand signals look so strong. They expect to remain supply constrained despite these hefty investments, as they simply cannot keep up with customer interest, even at this stage of the cycle. Specifically, they think they have inventory and supply commitments secured into calendar 2027, which provides more visibility than we're used to for a business model like this one.

The team was asked if they really believe massive CapEx budgets for 2026 can keep growing in 2027 and beyond. These mega caps represent half of their entire revenue base, so their continued desire to boost CapEx is vital for NVDA's growth engine. The team doesn't seem worried because they believe all of these investments will naturally grow operating cash flow and create more room for CapEx. All I will say is that semiconductor executives are always charismatic and tend to play down the risks of cyclical tailwinds slowing down when we are in the fun parts of those cycles. They will not be first to say demand signals are weakening. They will talk up positive signs for as long as they possibly can. Right now, it's very easy to find and highlight those positive signs because they remain numerous.

Data Center Runway – Interesting Notes:

There was an interesting note on the networking side that I think is worth covering. NVIDIA is now openly integrating NVLink with Amazon's custom silicon. There's growing belief that custom chips are better suited for some hyper-specific machine learning and AI workloads. NVDA's GPU architecture is better for far more workloads, but is more of an AI generalist vs. the AI specialists seen in ASICs. The integration is great news because it shows NVDA's networking suite is configurable enough to work closely with custom chip designers. Nvidia is positioning itself to be a big piece of custom silicon deployments.

Additionally, I think it's important to emphasize the tensor cores NVIDIA builds into its GPUs. While GPUs are far more generalist in nature vs. Google's Tensor Processing Units or other custom chips, tensor cores help remove the edge those specialized chips hold. They allow NVIDIA to run much more specialized workloads within the generalist compute framework to emulate the specialization that others can provide.

Data Center Ecosystem:

Several partnership developments were discussed during the quarter. NVDA is "thrilled" with its ongoing partnership with OpenAI and expects a new agreement to be in place soon. They are directly supporting Codex and tightening their relationship with this leading model provider. As recently covered, Meta and NVIDIA signed a multi-year partnership to expand that customer's usage of its chips and networking equipment. Next, the company invested $10B in Anthropic to help it scale Claude and planned agentic tools. Again, NVIDIA views these products as "the floodgates for enterprise AI adoption" and understandably wants to be as big a piece of that as it can be. They certainly have the balance sheet to be making these investments. Outside of these big announcements, it bolstered its compute relationship with CoreWeave, announced new partnerships with Synopsys, joined the U.S. Department of Energy's Genesis mission (to support U.S. tech leadership), fortified relationships with Cadence, Siemens, and Synopsys, and added new relationships with manufacturing titans in India.

NVDA views its ecosystem and vast integration network as key differentiators vs. the field. They know they can help these partners deliver better products and services to shared customers, and they know that working directly with these companies raises the probability of that happening. As an added bonus, NVDA gets stickier and stickier as its role in supply chain perfection deepens.

- Multi-year cloud service agreements rose from $26B to $27B Q/Q. These are contracts Nvidia has with cloud vendors to provide services that support its own scaling. They clearly feel the need to vastly bolster their commitments, which is good news for the public cloud and the entire AI cycle.

- Sovereign AI business tripled Y/Y to $30B annually. The Netherlands, Canada, France, Singapore, and the UK were highlighted as standouts.

Other Segments:

Gaming and AI PC demand was driven by the Blackwell-powered RTX GPU platform purpose-built for this segment. They made a slew of product announcements aimed at bolstering graphics quality, motion clarity, inference, latency, and overall performance. Demand here remains excellent. They do think supply constraints will be prevalent throughout the year and expect that to hold back growth. If things turn out better than expected, Y/Y growth for this bucket is attainable.

They also reviewed various product introductions surrounding their autonomous vehicle pursuit. To avoid being redundant, coverage of this news can be found in section 2 of this article. Physical AI is already up to a $6B+ run rate and that's merely the beginning. NVDA, through software and model introductions, has positioned itself as the technology layer for auto manufacturers racing to Level 4 autonomy far faster than they can on their own. They've spearheaded partnerships and apps that vastly lower the costs and risks associated with pursuing this promising opportunity. That means they are pretty agnostic to who wins and will instead lean on their demand aggregator partner (Uber) to make sure it’s helping customers build autonomous fleets AND that these fleets are highly utilized.

h. Take

Great quarter. If a normal company delivered this kind of outperformance, there's a very good chance shares would have responded better. Nvidia has been cursed by its own success. They have trained analysts to expect monumentally amazing quarters every three months. And they've done so by delivering those monumentally amazing quarters consistently. I will not call this report anything but great. At the same time, when looking at the historic outperformance for semiconductors vs. software over the last few months, it's easy to arrive at the conclusion that a lot of the good from this cycle has been priced into shares. That's true even at the low earnings multiple, as that multiple is reliant on margins remaining sky-high (which is tightly tied to cycle and demand health). If you're scratching your head wondering how these flawless financials can be shrugged off by Mr. Market, that could be why.

In terms of what matters going forward, it is all about cycle longevity. Signals right now are largely positive, and that should continue to support great results. At the same time, those signals will not remain uniformly positive forever. It's very important for shareholders to closely track any possible hints of cracks forming amid this super cycle. While things look amazing right now, I believe those hints are a matter of when, not if.